Yesterday I’ve got a weird problem with Adaptive Cursor Sharing. I’m not sure yet about the issue, but it seems to be related to cursor sharing histograms. Hopefully one day I will blog about what I’ve learnt from this experience.

To better monitor the problem on that specific query, I’ve prepared this script (tested on 12.1.0.2):

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 |

COLUMN Shareable HEADING 'S|H|A|R|E|A|B|L|E' COLUMN "Bind-Aware" HEADING 'B|I|N|D| |A|W|A|R|E' COLUMN Sensitive HEADING 'S|E|N|S|I|T|I|V|E' COLUMN Reoptimizable HEADING 'R|E|O|P|T|I|M|I|Z|A|B|L|E' BREAK on child_number on Execs on "Gets/Exec" on "Ela/Exec" on "Sensitive" on "Shareable" on "Bind-Aware" on bucket0 on bucket1 on bucket2 on cnt on "Reoptimizable" on is_resolved_adaptive_plan select * from (select * from ( select s.child_number, s.plan_hash_value, executions as Execs, round(buffer_gets/executions) as "Gets/Exec", round(elapsed_time/executions) as "Ela/Exec", is_bind_sensitive as "Sensitive", is_shareable as "Shareable", is_bind_aware as "Bind-Aware", to_char(h.bucket_id) as bucket, h.count as cnt, is_reoptimizable as "Reoptimizable", is_resolved_adaptive_plan, "UNBOUND_CURSOR", "SQL_TYPE_MISMATCH", "OPTIMIZER_MISMATCH", "OUTLINE_MISMATCH", "STATS_ROW_MISMATCH", "LITERAL_MISMATCH", "FORCE_HARD_PARSE", "EXPLAIN_PLAN_CURSOR", "BUFFERED_DML_MISMATCH", "PDML_ENV_MISMATCH", "INST_DRTLD_MISMATCH", "SLAVE_QC_MISMATCH", "TYPECHECK_MISMATCH", "AUTH_CHECK_MISMATCH", "BIND_MISMATCH", "DESCRIBE_MISMATCH", "LANGUAGE_MISMATCH", "TRANSLATION_MISMATCH", "BIND_EQUIV_FAILURE", "INSUFF_PRIVS", "INSUFF_PRIVS_REM", "REMOTE_TRANS_MISMATCH", "LOGMINER_SESSION_MISMATCH", "INCOMP_LTRL_MISMATCH", "OVERLAP_TIME_MISMATCH", "EDITION_MISMATCH", "MV_QUERY_GEN_MISMATCH", "USER_BIND_PEEK_MISMATCH", "TYPCHK_DEP_MISMATCH", "NO_TRIGGER_MISMATCH", "FLASHBACK_CURSOR", "ANYDATA_TRANSFORMATION", "PDDL_ENV_MISMATCH", "TOP_LEVEL_RPI_CURSOR", "DIFFERENT_LONG_LENGTH", "LOGICAL_STANDBY_APPLY", "DIFF_CALL_DURN", "BIND_UACS_DIFF", "PLSQL_CMP_SWITCHS_DIFF", "CURSOR_PARTS_MISMATCH", "STB_OBJECT_MISMATCH", "CROSSEDITION_TRIGGER_MISMATCH", "PQ_SLAVE_MISMATCH", "TOP_LEVEL_DDL_MISMATCH", "MULTI_PX_MISMATCH", "BIND_PEEKED_PQ_MISMATCH", "MV_REWRITE_MISMATCH", "ROLL_INVALID_MISMATCH", "OPTIMIZER_MODE_MISMATCH", "PX_MISMATCH", "MV_STALEOBJ_MISMATCH", "FLASHBACK_TABLE_MISMATCH", "LITREP_COMP_MISMATCH", "PLSQL_DEBUG", "LOAD_OPTIMIZER_STATS", "ACL_MISMATCH", "FLASHBACK_ARCHIVE_MISMATCH", "LOCK_USER_SCHEMA_FAILED", "REMOTE_MAPPING_MISMATCH", "LOAD_RUNTIME_HEAP_FAILED", "HASH_MATCH_FAILED", "PURGED_CURSOR", "BIND_LENGTH_UPGRADEABLE", "USE_FEEDBACK_STATS" from v$sql s join v$sql_cs_histogram h on (s.sql_id=h.sql_id and s.child_number=h.child_number and s.con_id=h.con_id ) join v$sql_shared_cursor shc on (shc.sql_id=h.sql_id and shc.child_number=h.child_number and s.con_id=shc.con_id ) where s.sql_id='&sql_id' ) pivot (sum(cnt) for (bucket) IN ('0' AS Bucket0,'1' AS Bucket1,'2' AS Bucket2)) ) unpivot (result FOR reason_type IN ("UNBOUND_CURSOR", "SQL_TYPE_MISMATCH", "OPTIMIZER_MISMATCH", "OUTLINE_MISMATCH", "STATS_ROW_MISMATCH", "LITERAL_MISMATCH", "FORCE_HARD_PARSE", "EXPLAIN_PLAN_CURSOR", "BUFFERED_DML_MISMATCH", "PDML_ENV_MISMATCH", "INST_DRTLD_MISMATCH", "SLAVE_QC_MISMATCH", "TYPECHECK_MISMATCH", "AUTH_CHECK_MISMATCH", "BIND_MISMATCH", "DESCRIBE_MISMATCH", "LANGUAGE_MISMATCH", "TRANSLATION_MISMATCH", "BIND_EQUIV_FAILURE", "INSUFF_PRIVS", "INSUFF_PRIVS_REM", "REMOTE_TRANS_MISMATCH", "LOGMINER_SESSION_MISMATCH", "INCOMP_LTRL_MISMATCH", "OVERLAP_TIME_MISMATCH", "EDITION_MISMATCH", "MV_QUERY_GEN_MISMATCH", "USER_BIND_PEEK_MISMATCH", "TYPCHK_DEP_MISMATCH", "NO_TRIGGER_MISMATCH", "FLASHBACK_CURSOR", "ANYDATA_TRANSFORMATION", "PDDL_ENV_MISMATCH", "TOP_LEVEL_RPI_CURSOR", "DIFFERENT_LONG_LENGTH", "LOGICAL_STANDBY_APPLY", "DIFF_CALL_DURN", "BIND_UACS_DIFF", "PLSQL_CMP_SWITCHS_DIFF", "CURSOR_PARTS_MISMATCH", "STB_OBJECT_MISMATCH", "CROSSEDITION_TRIGGER_MISMATCH", "PQ_SLAVE_MISMATCH", "TOP_LEVEL_DDL_MISMATCH", "MULTI_PX_MISMATCH", "BIND_PEEKED_PQ_MISMATCH", "MV_REWRITE_MISMATCH", "ROLL_INVALID_MISMATCH", "OPTIMIZER_MODE_MISMATCH", "PX_MISMATCH", "MV_STALEOBJ_MISMATCH", "FLASHBACK_TABLE_MISMATCH", "LITREP_COMP_MISMATCH", "PLSQL_DEBUG", "LOAD_OPTIMIZER_STATS", "ACL_MISMATCH", "FLASHBACK_ARCHIVE_MISMATCH", "LOCK_USER_SCHEMA_FAILED", "REMOTE_MAPPING_MISMATCH", "LOAD_RUNTIME_HEAP_FAILED", "HASH_MATCH_FAILED", "PURGED_CURSOR", "BIND_LENGTH_UPGRADEABLE", "USE_FEEDBACK_STATS")) where result='Y' order by child_number; |

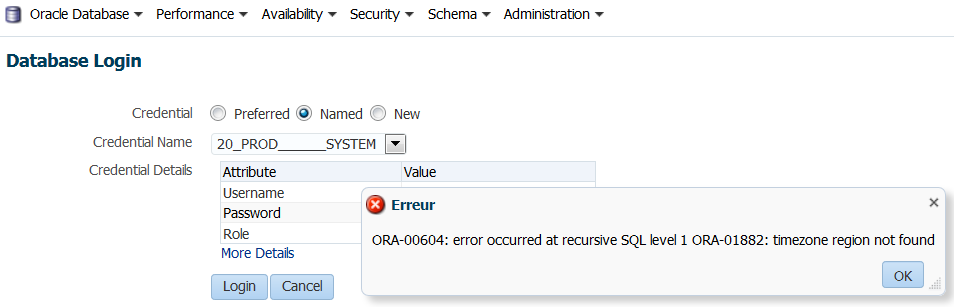

The result is something similar (in my case it has 26 child cursors):

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 |

R E O B P S S I T E H N I N A D M S R I I E A Z T A W A I B A B V L R L CHILD_NUMBER PLAN_HASH_VALUE EXECS Gets/Exec Ela/Exec E E E E I BUCKET0 BUCKET1 BUCKET2 REASON_TYPE R ------------ --------------- ---------- ---------- ---------- - - - - - ---------- ---------- ---------- ----------------------------- - 0 2293695281 455 2466 14464 Y Y Y N 0 455 0 ROLL_INVALID_MISMATCH Y 2293695281 BIND_EQUIV_FAILURE Y 1 1690560038 99 13943 103012 Y Y Y N 0 99 0 ROLL_INVALID_MISMATCH Y 1690560038 BIND_EQUIV_FAILURE Y 2 3815006743 541 43090 230245 Y Y Y N 0 541 0 BIND_EQUIV_FAILURE Y 3815006743 ROLL_INVALID_MISMATCH Y 3 1483632464 251 4111 18940 Y Y Y N 49 202 0 ROLL_INVALID_MISMATCH Y 1483632464 BIND_EQUIV_FAILURE Y 4 3815006743 1152 42632 220730 Y Y Y N 0 1000 0 BIND_EQUIV_FAILURE Y 3815006743 ROLL_INVALID_MISMATCH Y 5 3922835573 150 39252 184176 Y Y Y N 0 150 0 ROLL_INVALID_MISMATCH Y 3922835573 BIND_EQUIV_FAILURE Y 6 767857637 3 4731 124707 Y Y Y N 0 3 0 ROLL_INVALID_MISMATCH Y 767857637 BIND_EQUIV_FAILURE Y 7 767857637 11 4739 71119 Y Y Y N 0 11 0 BIND_EQUIV_FAILURE Y 8 2800467281 1 307 249727 Y Y Y N 0 1 0 BIND_EQUIV_FAILURE Y 9 3123241890 536 2982 14428 Y Y Y N 6 530 0 ROLL_INVALID_MISMATCH Y 3123241890 BIND_EQUIV_FAILURE Y 10 3125518635 17 315 16492 Y Y Y N 16 1 0 ROLL_INVALID_MISMATCH Y 3125518635 BIND_EQUIV_FAILURE Y 11 2184442252 130 4686 40188 Y Y Y N 0 130 0 ROLL_INVALID_MISMATCH Y 2184442252 BIND_EQUIV_FAILURE Y 12 3815006743 553 42765 231391 Y Y Y N 0 553 0 ROLL_INVALID_MISMATCH Y 3815006743 BIND_EQUIV_FAILURE Y 13 1166983254 47 14193 111256 Y Y Y N 0 47 0 BIND_EQUIV_FAILURE Y 1166983254 ROLL_INVALID_MISMATCH Y 14 2307602173 2 38 45922 Y Y Y N 2 0 0 BIND_EQUIV_FAILURE Y 2307602173 ROLL_INVALID_MISMATCH Y 15 767857637 11 4304 59617 Y Y Y N 0 11 0 BIND_EQUIV_FAILURE Y 767857637 ROLL_INVALID_MISMATCH Y 16 3108045525 2 34591 176749 Y N N N 1 1 0 ROLL_INVALID_MISMATCH Y 3108045525 LOAD_OPTIMIZER_STATS Y 3108045525 BIND_EQUIV_FAILURE Y 17 3108045525 6 1794 33335 Y Y Y N 4 2 0 BIND_EQUIV_FAILURE Y 3108045525 ROLL_INVALID_MISMATCH Y 18 2440443365 470 2009 13361 Y Y Y N 0 470 0 ROLL_INVALID_MISMATCH Y 2440443365 BIND_EQUIV_FAILURE Y 19 4079924956 15 2032 19773 Y Y Y N 8 7 0 ROLL_INVALID_MISMATCH Y 4079924956 BIND_EQUIV_FAILURE Y 20 777919270 32 2675 18260 Y Y Y N 11 21 0 BIND_EQUIV_FAILURE Y 777919270 ROLL_INVALID_MISMATCH Y 21 1428146033 63 13929 111116 Y Y Y N 0 63 0 ROLL_INVALID_MISMATCH Y 1428146033 BIND_EQUIV_FAILURE Y 22 3815006743 218 43673 234642 Y Y Y N 0 218 0 BIND_EQUIV_FAILURE Y 3815006743 ROLL_INVALID_MISMATCH Y 23 277802667 1 62 99268 Y Y Y N 1 0 0 BIND_EQUIV_FAILURE Y 277802667 ROLL_INVALID_MISMATCH Y 24 3898025231 3 2364 111231 Y Y Y N 0 3 0 BIND_EQUIV_FAILURE Y 3898025231 ROLL_INVALID_MISMATCH Y 25 767857637 2 6495 169363 Y Y Y N 0 2 0 ROLL_INVALID_MISMATCH Y 767857637 BIND_EQUIV_FAILURE Y 26 3690167092 100 2998 20138 Y Y Y N 0 100 0 BIND_EQUIV_FAILURE Y 3690167092 ROLL_INVALID_MISMATCH Y |

It’s a quick way to get the relevant information in a single result.

Off course, if you need deeper details, you should consider something more powerful like SQLd360 from Mauro Pagano.

Credits: I’ve got the unpivot idea (and copied that part of the code) from this post by Timur Akhmadeev.

—

Ludo