I’ve realized these days that the great list of articles by Oracle Alchemist does not contain any articles describing a little more in depth common roles and Users.

I’ve found these ones by Pete Finnigan and Bobby Curtis:

http://www.petefinnigan.com/weblog/archives/00001366.htm

http://dbasolved.com/2013/06/29/common-user-vs-local-user-12c-edition/

http://www.petefinnigan.com/weblog/archives/00001370.htm

But I would like to investigate a little more.

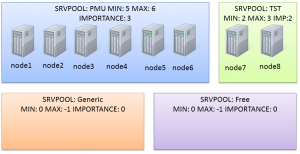

My test environment

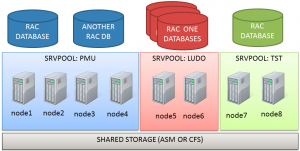

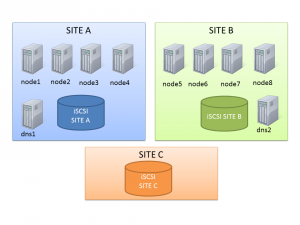

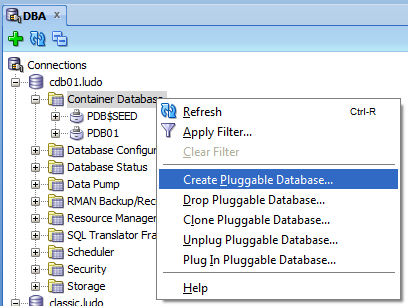

Just to give you an idea, I have two PDBs (HR and HR2), each containing an HR schema.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 |

SQL> alter session set container=CDB$ROOT; Session altered. SQL> select NAME, CON_ID from v$containers; NAME CON_ID ------------------------------ ---------- CDB$ROOT 1 PDB$SEED 2 HR 3 HR2 4 4 rows selected. SQL> r 1* select username, con_id from cdb_users where username ='HR' USERNAME CON_ID ------------------------------ ---------- HR 4 HR 3 2 rows selected. |

Creating the common user

As already pointed by the existing articles, I can’t create a common user into the root container without the c## prefix, unless I’m altering the hidden parameter _common_user_prefix.

|

1 2 3 4 5 |

SQL> create user goofy identified by pippo; create user goofy identified by pippo * ERROR at line 1: ORA-65096: invalid common user or role name |

so I specify the correct prefix, and it works:

|

1 2 3 4 5 6 7 |

SQL> create user C##GOOFY identified by pippo; User created. SQL> grant create session, alter session to c##goofy; Grant succeeded. |

The user is common, so it appears in all the containers, I can check it by querying CDB_USERS from the root container.

|

1 2 3 4 5 6 7 |

SQL> select username, con_id from cdb_users where username like '%GOOFY%'; USERNAME CON_ID -------------------------------------------------- ---------- C##GOOFY 1 C##GOOFY 3 C##GOOFY 4 |

Creating the local user

Then I create also a local user into the HR PDB.

|

1 2 3 4 5 6 7 8 9 10 11 |

SQL> alter session set container=HR; Session altered. SQL> create user goofy identified by pippo; User created. SQL> grant create session, alter session to goofy; Grant succeeded. |

CDB_USERS scope

From the PDB I see only the users in the PDB scope:

|

1 2 3 4 5 6 |

SQL> select username, con_id from cdb_users where username like '%GOOFY%'; USERNAME CON_ID -------------------------------------------------- ---------- C##GOOFY 3 GOOFY 3 |

If I change to the root, I see the users valid into all the containers:

|

1 2 3 4 5 6 7 8 9 10 11 12 |

SQL> alter session set container=CDB$ROOT; Session altered. SQL> select username, con_id from cdb_users where username like '%GOOFY%'; USERNAME CON_ID -------------------------------------------------- ---------- C##GOOFY 1 C##GOOFY 3 C##GOOFY 4 GOOFY 3 |

Creating a common role

Do the roles obey to the same rules valid for the users?

|

1 2 3 4 5 6 7 8 9 |

SQL> alter session set container=CDB$ROOT; Session altered. SQL> create role country_reader; create role country_reader * ERROR at line 1: ORA-65096: invalid common user or role name |

Yes, they do! So, let’s create a common role with the C## prefix:

|

1 2 3 |

SQL> create role C##COUNTRY_ROLE container=ALL; Role created. |

It works, but if I try to create a common role into the root container only, I get an error:

|

1 2 3 4 5 |

SQL> create role C##REGIONS_ROLE container=current; create role C##REGIONS_ROLE container=current * ERROR at line 1: ORA-65094: invalid local user or role name |

And also if I try to create a local role into the root, I can’t:

|

1 2 3 4 5 |

SQL> create role REGIONS_ROLE container=current; create role REGIONS_ROLE container=current * ERROR at line 1: ORA-65049: creation of local user or role is not allowed in CDB$ROOT |

Note that the error ORA-65049 is different from the ORA-65096 that I’ve got before.

My conclusion is that the clause container of the create role and create user statements doesn’t make sense as you can ONLY create common users and roles into the root container and only local ones into the PDBs.

Creating a local role

Just as experiment, I’ve tried to see if I can create a local role with container=ALL. It doesn’t work:

|

1 2 3 4 5 6 7 8 9 |

SQL> alter session set container=hr; Session altered. SQL> create role REGION_ROLE container=ALL; create role REGION_ROLE container=ALL * ERROR at line 1: ORA-65050: Common DDLs only allowed in CDB$ROOT |

So I create the local role with container=current:

|

1 2 3 |

SQL> create role REGION_ROLE container=CURRENT; Role created. |

Now, from the PDB I can see the two roles I can access, whereas from the root I can see all the roles I’ve defined so far: the common role is available from all the PDBs, the local role only from the db where it has been defined, just like the users.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 |

SQL> select role, con_id from cdb_roles where role like '%COUNTRY%' or role like '%REGION%'; ROLE CON_ID ----------------------------------------------- ---------- REGION_ROLE 3 C##COUNTRY_ROLE 3 SQL> alter session set container=CDB$ROOT; Session altered. SQL> select role, con_id from cdb_roles where oracle_maintained='N'; ROLE CON_ID ----------------------------------------- ---------- C##COUNTRY_ROLE 1 C##COUNTRY_ROLE 4 C##COUNTRY_ROLE 3 REGION_ROLE 3 4 rows selected. |

Granting object privileges to the roles

From the root I can’t give grants on objects that reside in a PDB since I cannot see them: I need to connect to the PDB and give the grants from there:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 |

SQL> grant select on hr.countries to C##country_role; grant select on hr.countries to C##country_role * ERROR at line 1: ORA-00942: table or view does not exist SQL> alter session set container=hr; Session altered. SQL> grant select on hr.countries to C##COUNTRY_ROLE; Grant succeeded. SQL> grant select on hr.regions to REGION_ROLE; Grant succeeded. |

Now, if I query CDB_TAB_PRIVS from the root, I see that the grants are given at a local level (con_id=3 and common=N):

|

1 2 3 4 5 6 7 8 9 10 11 12 |

SQL> alter session set container=cdb$root; Session altered. SQL> select GRANTEE, OWNER, TABLE_NAME, PRIVILEGE, COMMON, CON_ID from cdb_tab_privs where owner='HR'; GRANTEE OWNER TABLE_NAME PRIVILEGE COM CON_ID -------------------- -------- ---------------- ------------- --- ---------- REGION_ROLE HR REGIONS SELECT NO 3 C##COUNTRY_ROLE HR COUNTRIES SELECT NO 3 2 rows selected. |

Granting common and local roles to commond and local users

From a PDB, I can grant local roles to local users or common users:

|

1 2 3 4 5 6 7 |

SQL> grant REGION_ROLE to goofy; Grant succeeded. SQL> grant REGION_ROLE to c##goofy; Grant succeeded. |

But I can’t grant a common role to a common user with container=all if I’m in a PDB:

|

1 2 3 4 5 |

SQL> grant C##COUNTRY_ROLE to c##goofy container=all; grant C##COUNTRY_ROLE to c##goofy container=all * ERROR at line 1: ORA-65050: Common DDLs only allowed in CDB$ROOT |

To grant the a common role to a common user I can decide either to:

- give the grant locally while connected to the PDB:

|

1 2 3 4 5 |

SQL> grant C##COUNTRY_ROLE to c##goofy container=current; Grant succeeded. SQL> |

- give the grant commonly while connected to the root:

|

1 2 3 4 5 6 7 |

SQL> alter session set container=cdb$root; Session altered. SQL> grant C##COUNTRY_ROLE to c##goofy container=all; Grant succeeded. |

I can also grant locally both roles and system privileges to common users while connected to the root container: in this case the privileges are applied to the root container only. Finally having the clause container finally starts to make sense:

|

1 2 3 4 5 6 7 |

SQL> grant C##COUNTRY_ROLE to c##goofy container=current; Grant succeeded. SQL> grant select any table to c##goofy container=current; Grant succeeded. |

Verifying the grants

Ok, I’ve given the grants and I’ve never verified if they work, so far.

Let’s try with the select any table privilege I’ve given in the last snippet. I expect C##GOOFY to select any table from the root container and only HR.COUNTRIES and HR.REGIONS on the HR PDB bacause they have been granted through the two roles.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 |

SQL> connect c##goofy/pippo; Connected. SQL> select count(*) from APEX_040200.WWV_FLOW_TABS; COUNT(*) ---------- 609 1 row selected. SQL> connect c##goofy/pippo@node4:1521/hr ERROR: ORA-01045: user C##GOOFY lacks CREATE SESSION privilege; logon denied Warning: You are no longer connected to ORACLE. |

What’s the mess? When I’ve created the user c##goofy, I’ve granted create and alter session without the container=all:

|

1 |

SQL> grant create session, alter session to c##goofy; |

According to the documentation, the grant command uses container=current by default (common=N):

|

1 2 3 4 5 6 7 8 9 |

SQL> select * from dba_sys_privs where grantee='C##GOOFY'; GRANTEE PRIVILEGE ADM COM ------------------------------ ---------------------------------------- --- --- C##GOOFY SELECT ANY TABLE NO NO C##GOOFY ALTER SESSION NO NO C##GOOFY CREATE SESSION NO NO 3 rows selected. |

So, I need to give the grants commonly to let c##goofy connect to all the PDBs:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 |

SQL> grant create session, alter session to c##goofy container=all; Grant succeeded. SQL> select * from dba_sys_privs where grantee='C##GOOFY'; GRANTEE PRIVILEGE ADM COM ------------------------------ ---------------------------------------- --- --- C##GOOFY SELECT ANY TABLE NO NO C##GOOFY ALTER SESSION NO NO C##GOOFY CREATE SESSION NO NO C##GOOFY ALTER SESSION NO YES C##GOOFY CREATE SESSION NO YES 5 rows selected. |

Now I see that the grants give two distinct permissions : one local and the other common.

If I revoke the grants without container clause, actually only the local one is revoked and the user can continue to login. To revoke the grants I would need to check and revoke both local and common privileges.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 |

SQL> revoke create session, alter session from c##goofy; Revoke succeeded. SQL> select * from dba_sys_privs where grantee='C##GOOFY'; GRANTEE PRIVILEGE ADM COM ------------------------------ ---------------------------------------- --- --- C##GOOFY SELECT ANY TABLE NO NO C##GOOFY ALTER SESSION NO YES C##GOOFY CREATE SESSION NO YES 3 rows selected. SQL> connect C##GOOFY/pippo; Connected. |

After the first revoke statement, I can still connect to HR and verify that my select any table privilege doesn’t apply to the PDB as it’s local to the root container:

|

1 2 3 4 5 6 7 |

SQL> connect C##GOOFY/pippo@node4:1521/hr Connected. SQL> select count(*) from hr.DEPARTMENTS; select count(*) from hr.DEPARTMENTS * ERROR at line 1: ORA-00942: table or view does not exist |

After that, I want to check the privileges given through the local and common roles.

I expect both users to select from hr.countries and hr.regions since they have been granted indirectly by the roles.

Let’s try the local user first:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 |

SQL> connect goofy/pippo@node4:1521/hr Connected. SQL> select count(*) from hr.regions; COUNT(*) ---------- 4 1 row selected. SQL> select count(*) from hr.countries; COUNT(*) ---------- 25 1 row selected. |

Yeah, it works as expected.

Now let’s try the common user:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 |

SQL> connect c##goofy/pippo@node4:1521/hr Connected. SQL> select count(*) from hr.regions; COUNT(*) ---------- 4 1 row selected. SQL> select count(*) from hr.countries; COUNT(*) ---------- 25 1 row selected. |

It also work, so everything is ok.

Common and local grants, why you must pay attention

During the example, I’ve granted the C##COUNTRY_ROLE many times: locally to PDB, locally to the ROOT, commonly. The result is that I’ve flooded the grant table with many entries:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 |

SQL> alter session set container=CDB$ROOT; Session altered. SQL> select grantee, GRANTED_ROLE, COMMON, CON_ID from cdb_role_privs where grantee like '%GOOFY' order by GRANTEE, GRANTED_ROLE; GRANTEE GRANTED_ROLE COM CON_ID ------------------------------ ------------------------------ --- ---------- C##GOOFY C##COUNTRY_ROLE YES 4 C##GOOFY C##COUNTRY_ROLE YES 3 C##GOOFY C##COUNTRY_ROLE YES 1 C##GOOFY C##COUNTRY_ROLE NO 1 C##GOOFY C##COUNTRY_ROLE NO 3 C##GOOFY REGION_ROLE NO 3 GOOFY C##COUNTRY_ROLE NO 3 GOOFY REGION_ROLE NO 3 8 rows selected. |

Let’s try to clean things: for sure I don’t need the grant local to the root:

|

1 2 3 |

SQL> revoke C##COUNTRY_ROLE from C##GOOFY container=current; Revoke succeeded. |

Then I can choose between revoking the common privilege or the local one. Let’s try to remove the local one:

|

1 2 3 4 5 6 7 |

SQL> alter session set container=hr; Session altered. SQL> revoke C##COUNTRY_ROLE from C##GOOFY container=current; Revoke succeeded. |

I’ve removed the local one, but I have still the common one (I’m connected to the PDB so the entries from the other containers are not displayed):

|

1 2 3 4 5 6 7 8 9 10 |

SQL> select grantee, GRANTED_ROLE, COMMON, CON_ID from cdb_role_privs where grantee like '%GOOFY' order by GRANTEE, GRANTED_ROLE; GRANTEE GRANTED_ROLE COM CON_ID ------------------------------ ------------------------------ --- ---------- C##GOOFY C##COUNTRY_ROLE YES 3 C##GOOFY REGION_ROLE NO 3 GOOFY C##COUNTRY_ROLE NO 3 GOOFY REGION_ROLE NO 3 4 rows selected. |

I still have access to the tables as expected:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 |

SQL> connect C##GOOFY/pippo@node4:1521/hr Connected. SQL> select count(*) from hr.countries; COUNT(*) ---------- 25 1 row selected. SQL> select count(*) from hr.regions; COUNT(*) ---------- 4 1 row selected. |

So, you must pay attention to a couple of things:

- When granting privileges from the root container, keep in mind that container=current is the default even when the grantee or the role granted are common.

- When revoking the grants with a Multitenant architecture, keep in mind that there is a scope and you may need more than one statement to actually remove the grant from all the scopes.

As always, I look for opinions and suggestions, feel free to comment!

🙂

—

Ludovico